Journey to Becoming an AI Cloud Engineer in 2026

AI didn't kill DevOps — it evolved it. A practical look at what AI Cloud Engineering and AgentOps actually mean in 2026, who's hiring for it, and the skills worth investing in this year.

Engineering deep-dives, hiring intelligence, and career strategy — from practitioners.

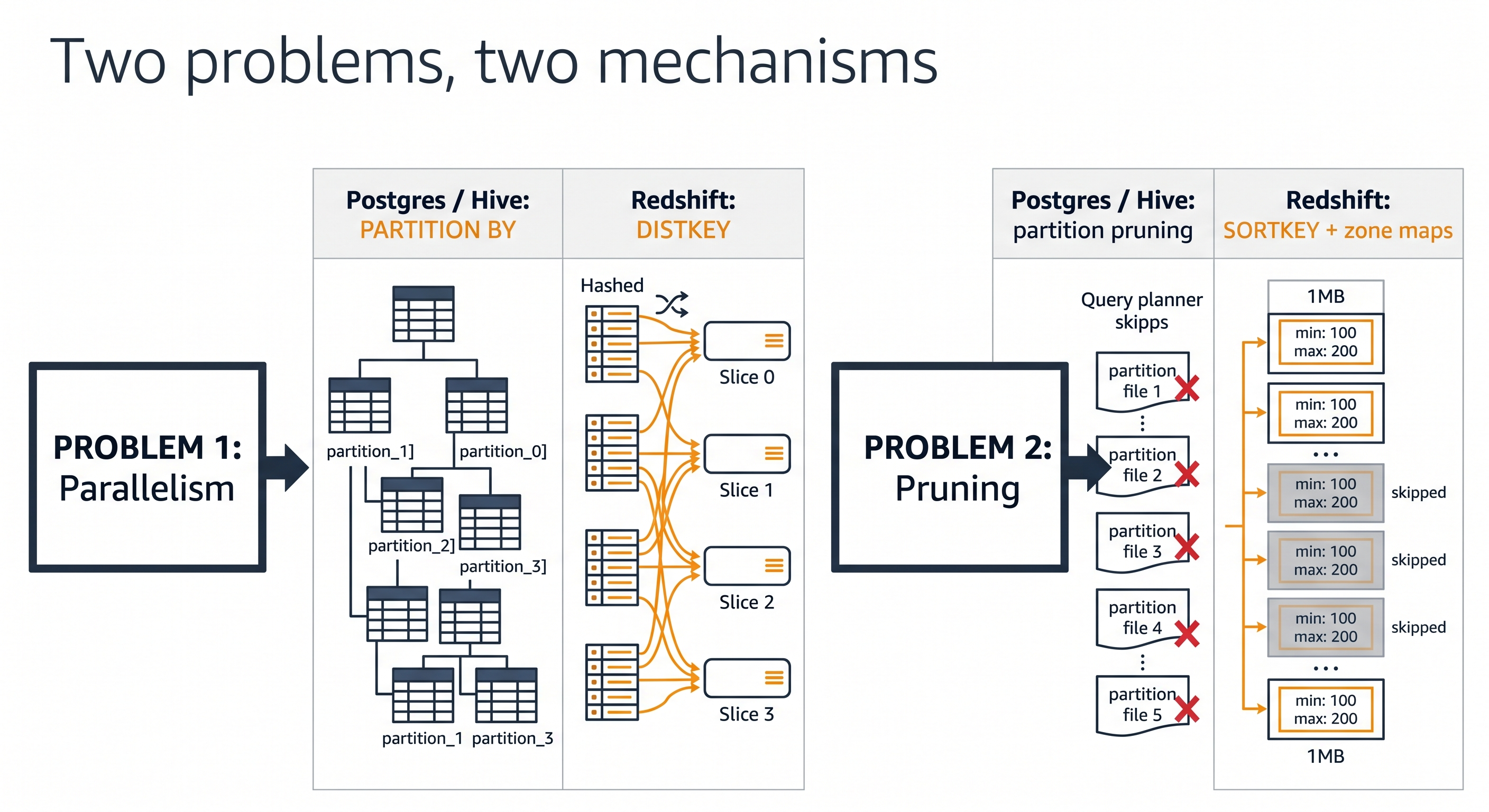

If you're coming to AWS Redshift from PostgreSQL or Hive, the lack of a PARTITION BY clause in table definitions can be confusing. Here's how DISTKEY, SORTKEY, and zone maps take the place of partitioning in an AWS data lake and data warehouse, with side-by-side SQL examples.

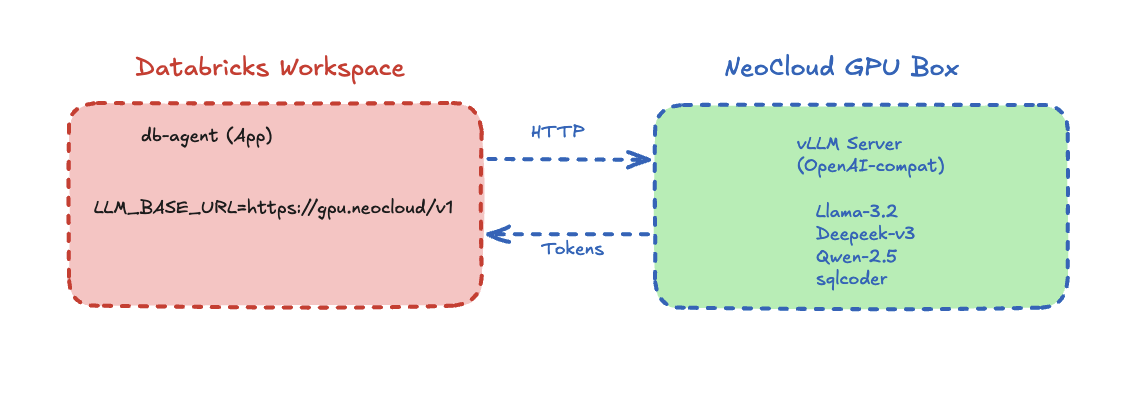

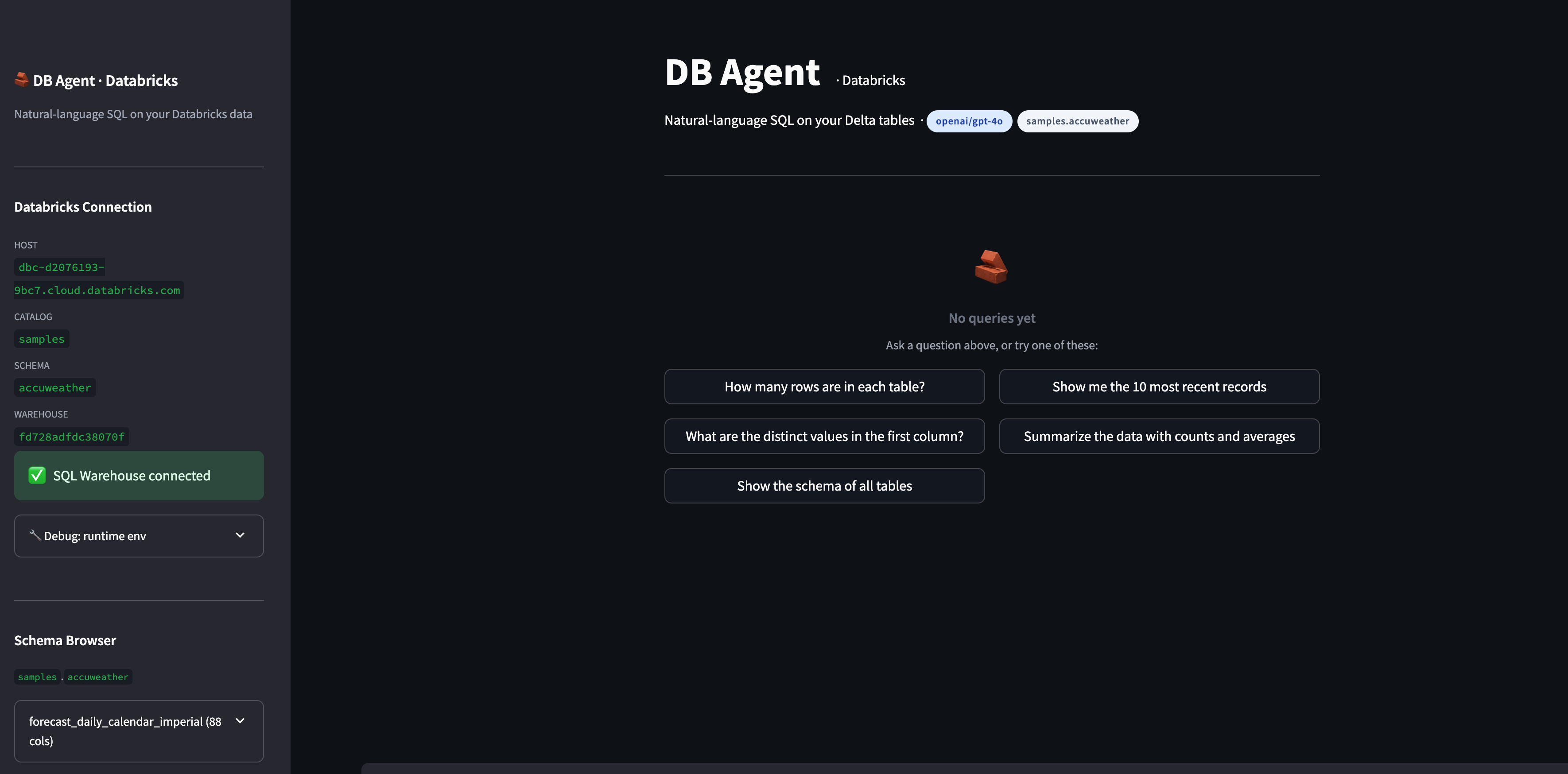

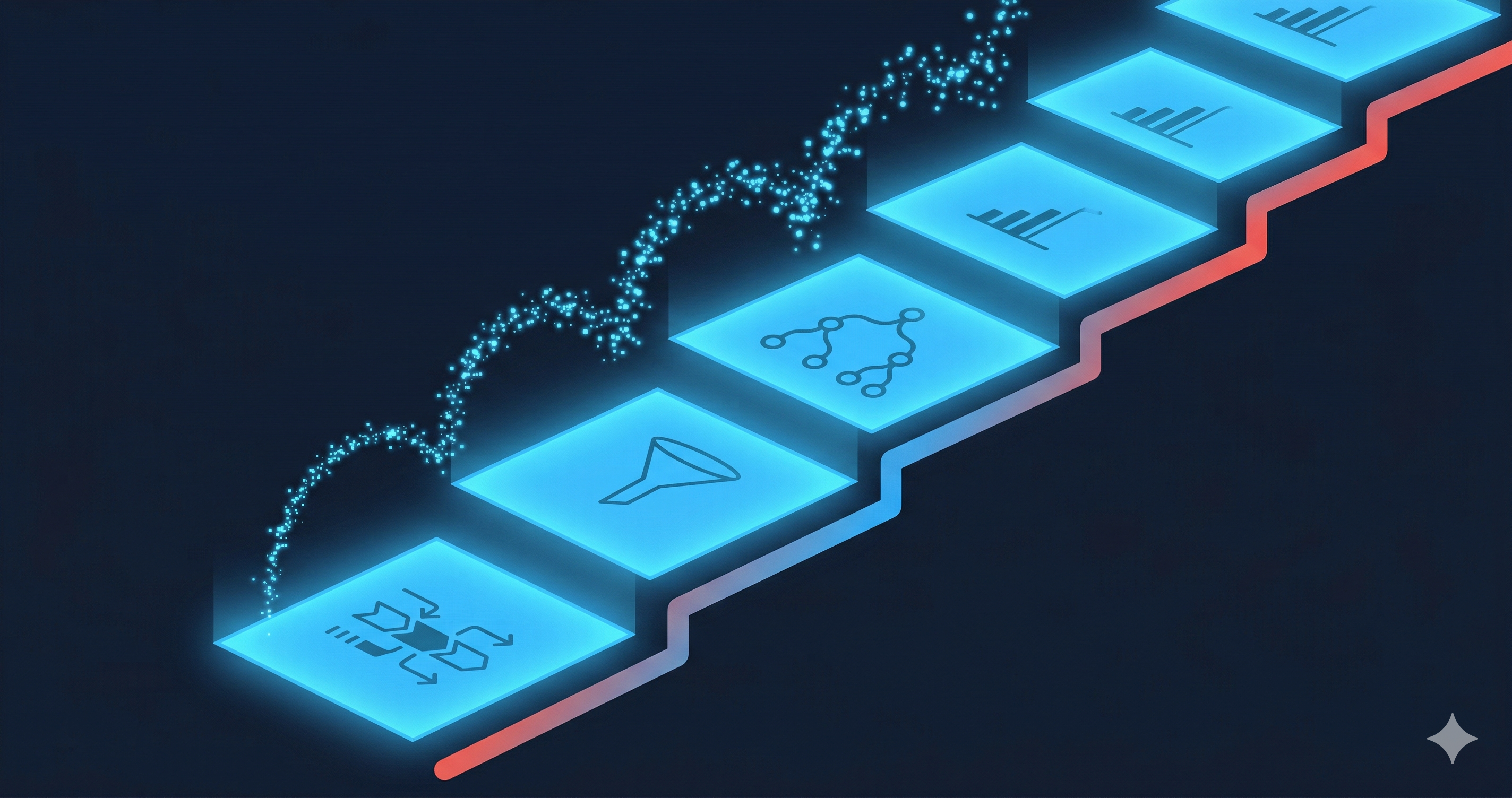

A working reference architecture for a text-to-SQL AI agent that joins Databricks Lakebase (Postgres OLTP) and Unity Catalog Delta (OLAP) through Lakehouse Federation, with a pluggable LLM endpoint that can run on Databricks Model Serving, OpenAI, or self-hosted vLLM on a neo-cloud GPU.

Lessons from teaching 10 people to build a text-to-SQL AI agent on Databricks in 2 hours, including the roadblocks we hit. A guide for any team getting started on Databricks.

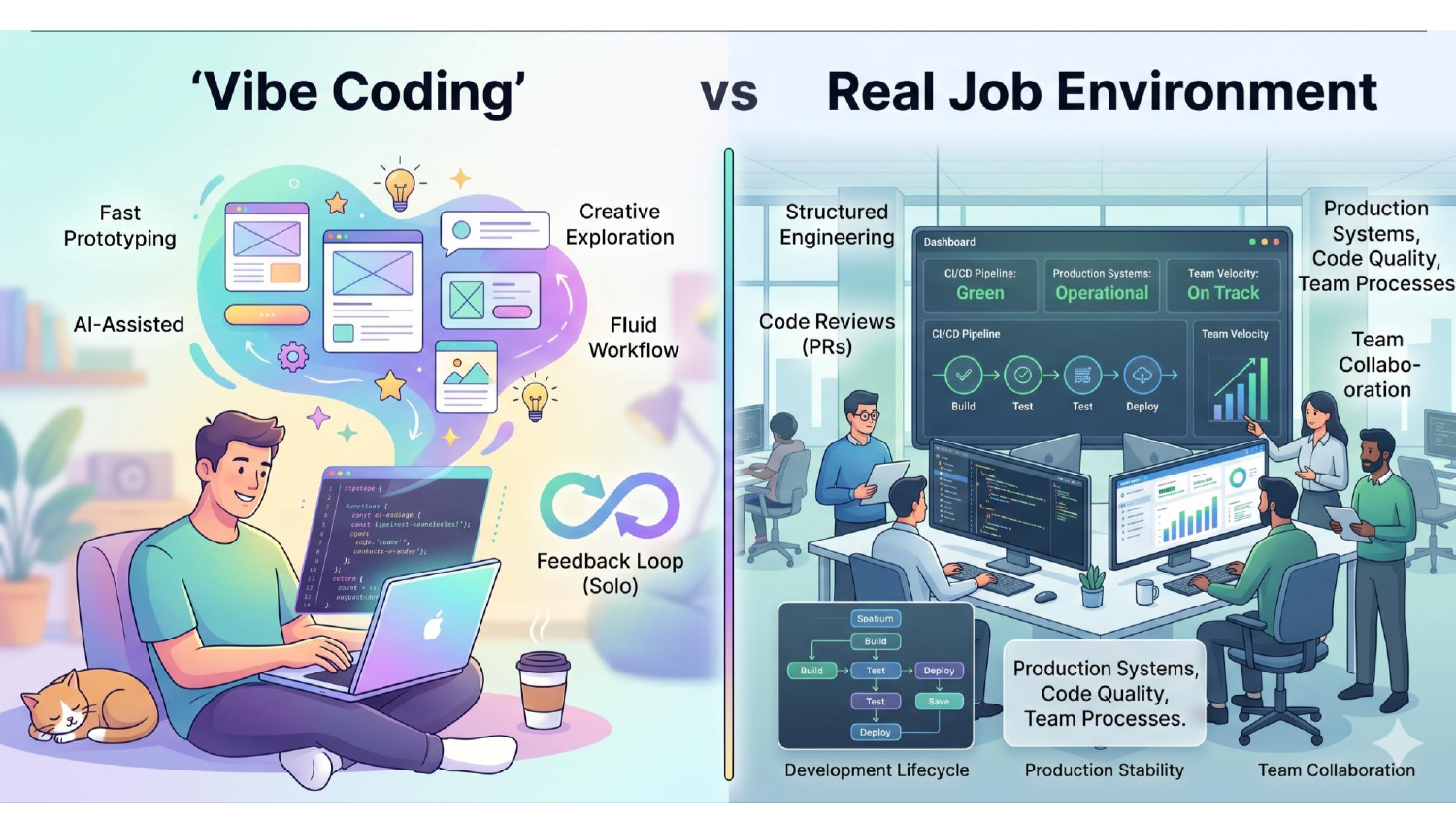

Notes from a live webinar with 40 engineers. AI-coded apps don't land developer jobs — engineering does. Here's what hiring managers in 2026 actually pay for.

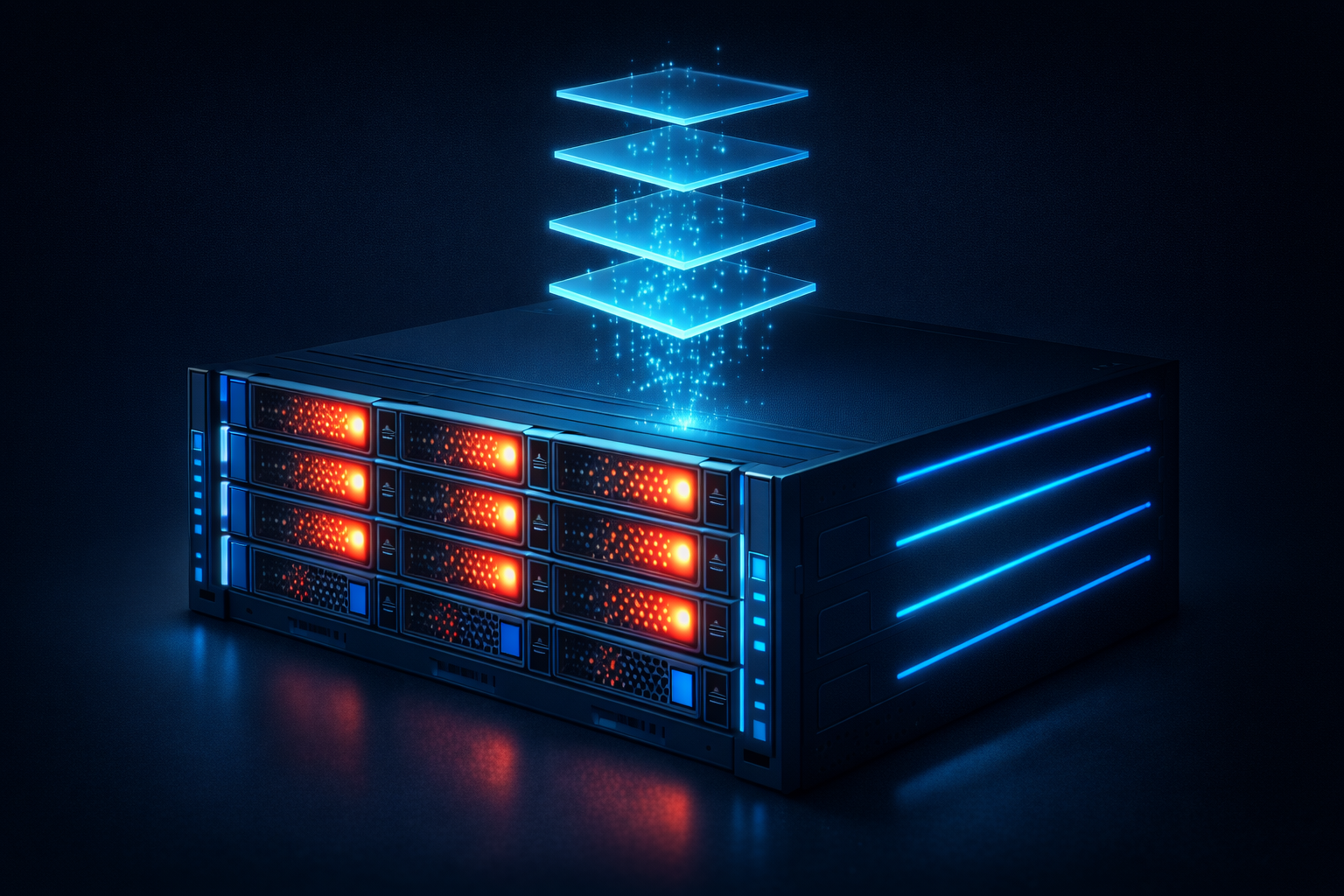

A practical guide to setting up vLLM inference, benchmarking with NVIDIA GenAI-Perf, and building an observability stack using Prometheus and Grafana.

A practical, stackable AI upskilling program covering LLMOps, RAG pipelines, and LLM agent development for enterprise teams.

A technical walkthrough of, and lessons learned from, deploying DeepSeek-V3.2-Exp on high-end NVIDIA H200 GPU infrastructure.

How AI-native development tools like All Hands AI, Devstral, and vLLM are rewriting the rules of software development.

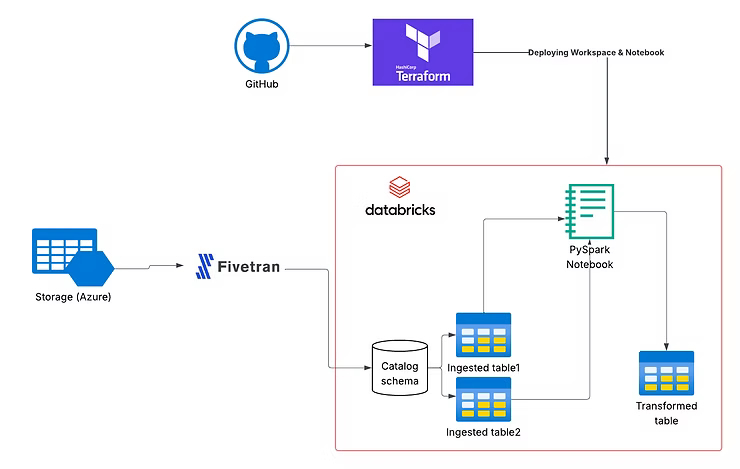

How to apply DevOps principles to Databricks using Terraform for infrastructure and GitHub Actions for CI/CD automation.

Why DevOps and cloud infrastructure are now foundational to AI strategy across industries.

A structured 60-day roadmap with hands-on projects, certifications, and job search strategies to land your first DevOps role.

A step-by-step guide to automating Databricks deployments using Infrastructure-as-Code — Terraform modules, Spark jobs, and GitHub Actions CI/CD.

Key takeaways from the TorontoAI meetup on resume building, profile optimization, and career guidance for engineers.

Leveraging AWS AI and security services to build intelligent threat detection and automated security response pipelines.

A complete career guide for DevOps engineers navigating the AI-driven transformation of infrastructure and operations.

How observability strategies differ between traditional infrastructure and specialized AI/GPU cloud environments.

Tracing the evolution of DevOps from manual ClickOps to automated platform engineering, and how AI is reshaping the discipline.

A practical guide for recruiters to understand AI roles, evaluate technical skills, and source AI talent effectively.

How to deploy a Python Flask application with a MySQL database backend on Kubernetes.

A step-by-step walkthrough for containerizing and deploying a Django application to a Kubernetes cluster.