Vibe Coding Won't Get You a Developer Job in 2026 (And What Actually Will)

Notes from a live webinar with 40 engineers. AI-coded apps don't land developer jobs — engineering does. Here's what hiring managers in 2026 actually pay for.

The Twitter narrative goes like this: AI will write the code, vibe coders will ship the apps, traditional developers are obsolete. Open Cursor, prompt Claude, push to GitHub, screenshot for LinkedIn, repeat.

Last night I ran a live webinar with 40 engineers — career switchers, fresh grads, IT folks trying to break into development. Most of them had built apps with AI. Many had posted them on LinkedIn. Almost none of them had landed an interview.

That gap — between "I can vibe code an app" and "someone wants to hire me" — is what this post is about.

I've been a software developer for 20 years. I've ridden through cloud migration, big data, the SaaS boom, and statistical ML before it was rebranded as AI. Every wave brings the same headline: "developers are about to be obsolete." And every wave, in reality, makes the work more complex, not less. AI is the same. The difference is that this time, a lot of people are betting their careers on the headline instead of the reality.

Here's what I've actually seen — from running bootcamps, placing students at Toronto-based companies, and shipping AI-assisted apps myself.

Why people who can code aren't getting interviews

When I asked the audience to describe their job-search experience, the same answer kept coming back: "I'm building projects but no one is calling me back."

Here's why, in four lines I put on a slide:

- You can generate code

- But you can't explain it

- You've never deployed anything real

- You don't understand systems

This isn't about laziness or talent. It's about a category error. Vibe coding gets you a working artifact. Hiring managers buy something different.

A business hires you for one of two reasons: to make them money or save them money. There is no third reason. If your skill doesn't connect to either of those goals, you're invisible to the hiring funnel — no matter how clean your prompts are or how cleverly you used Claude.

The toy app you posted on LinkedIn? It makes nobody money. It saves nobody time. It's a portfolio piece for a portfolio that doesn't exist yet. That's the problem.

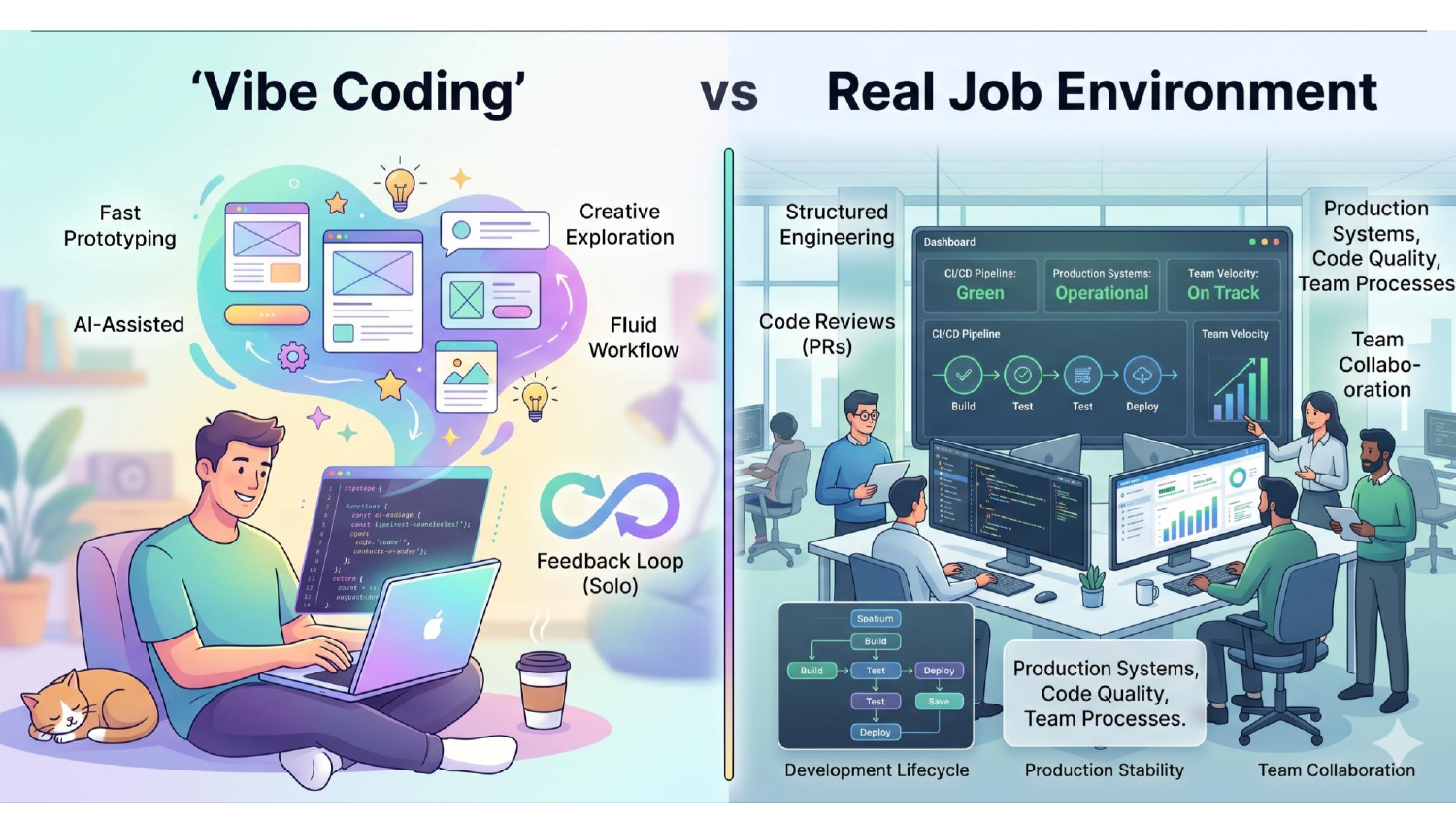

What "vibe coding" actually is — and where it stops working

Andrej Karpathy coined the term. The idea is that once AI tools are good enough, you stop thinking in syntax: you think in functional logic and let the model handle the translation. Prompt → AI generates code → developer reviews → iterate.

It works. I do it every day. The bootcamp app I demoed last night was vibe coded. The website I run is vibe coded. This isn't an anti-AI post.

The problem isn't vibe coding. The problem is vibe coding without the engineering layer underneath.

Look at the visual above: the production car next to the vibe-coded one. Both are technically "cars." The vibe-coded one has exposed wires, no headlights, no steering wheel, and a glitchy interface. It moves. It also kills you on the highway.

Enterprises know this distinction intuitively, even if they don't articulate it. They are not buying "moves." They're buying "runs in production for ten years without taking the company down."

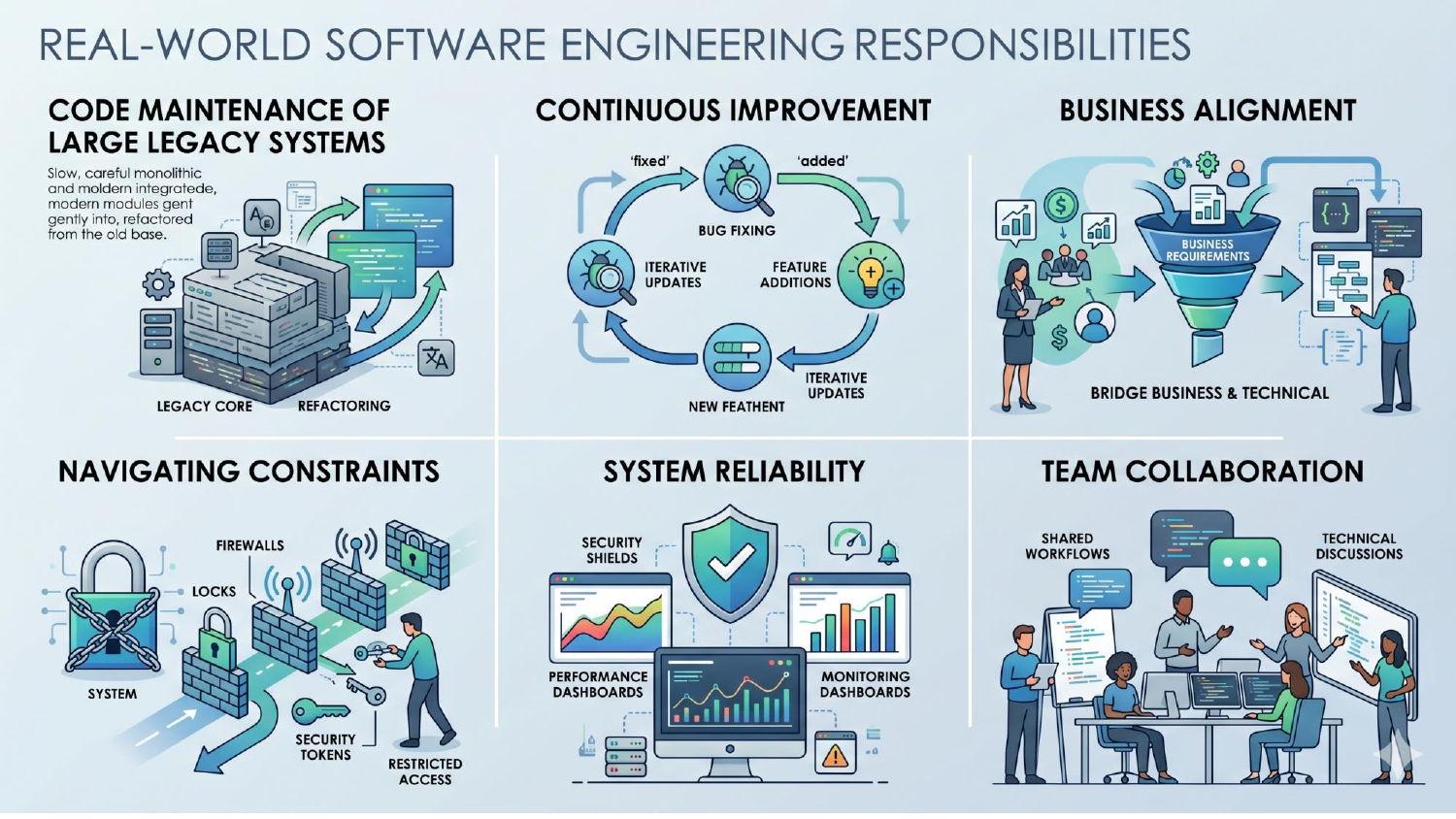

What companies actually expect

Here's what real engineering work looks like inside a company — bank, insurance, manufacturing, anywhere with a real revenue line:

1. Code maintenance of large legacy systems. You are never starting on a blank canvas. You're working in a codebase that's older than your career, written by people who've left, with conventions that nobody documented.

2. Continuous improvement. Bug fixes, feature additions, iterative updates. The code is alive, and your job is to keep it that way.

3. Business alignment. You translate business asks into technical work. JIRA tickets, product managers, stakeholder calls. If you can't have that conversation, you can't function.

4. Navigating constraints. Locked-down laptops, firewalls, restricted access, compliance requirements. The cool thing you'd do at home is forbidden by IT for reasons that took the security team years to learn the hard way.

5. System reliability. Security, monitoring, performance, observability. Deploying is the easy 5%. Knowing when your system is broken at 3 AM and being able to fix it — that's the 95%.

6. Team collaboration. Pull requests, code reviews, disagreement. Silicon Valley has a saying: don't work with a jerk. People will tolerate someone slightly less skilled. Almost no one tolerates someone who burns down team trust.

None of this is solvable by prompting harder.

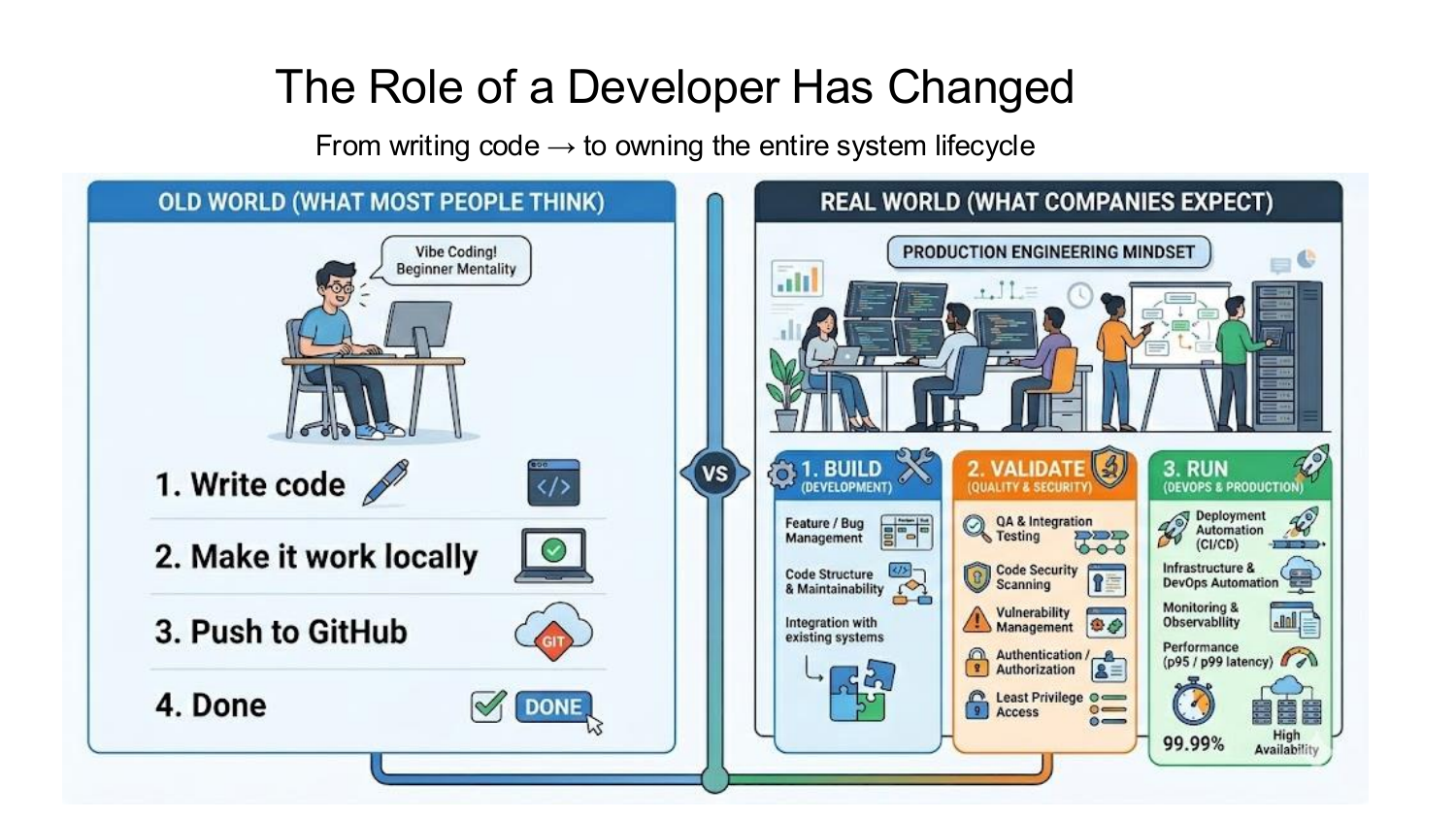

The developer role has tripled — and that's the actual reason hiring is hard

Five years ago, a software team had specialized roles: a developer, a QA engineer, and a DevOps engineer or SRE. They shipped together.

That world is over. Today, hiring managers expect one person to:

- Build — write the feature, integrate with existing systems, maintain code quality.

- Validate — write tests (often before the code, via TDD), run security scans, check for vulnerabilities.

- Run — deploy with CI/CD, monitor in production, respond to alerts.
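
As a rough illustration of the Validate step, here is a minimal test-first sketch in Python. The function name `is_valid_bookmark_url` and its rules are hypothetical, chosen only for illustration. In a TDD workflow the assertions in `run_tests` would be written first, and the function body filled in until they pass.

```python
from urllib.parse import urlparse


def is_valid_bookmark_url(url: str) -> bool:
    """Hypothetical validator: accept only absolute http(s) URLs with a host."""
    parsed = urlparse(url.strip())
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def run_tests() -> None:
    # In a test-first workflow these cases exist before the function body does.
    assert is_valid_bookmark_url("https://example.com/docs")
    assert is_valid_bookmark_url("  http://example.com  ")   # padding is stripped
    assert not is_valid_bookmark_url("example.com")          # no scheme
    assert not is_valid_bookmark_url("ftp://example.com")    # wrong scheme
    assert not is_valid_bookmark_url("javascript:alert(1)")  # script URL
    assert not is_valid_bookmark_url("https://")             # no host


if __name__ == "__main__":
    run_tests()
    print("all checks passed")
```

The point is less the validator than the habit: each rejected case is a bug report that never has to be filed.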

AI didn't eliminate jobs. It compressed three jobs into one seat. The expectation isn't lower — it's higher. And companies are willing to pay senior salaries to the person who can do all three, while passing on the person who can only generate React components from prompts.

This is also why salaries for "AI-native" hobbyist developers aren't going up. The hot money is on the engineer who can ship a production-grade microservice, write its CI/CD pipeline, scan it for CVEs, and explain it in a code review — using AI as a multiplier, not a substitute.

A concrete story: the bookmark app

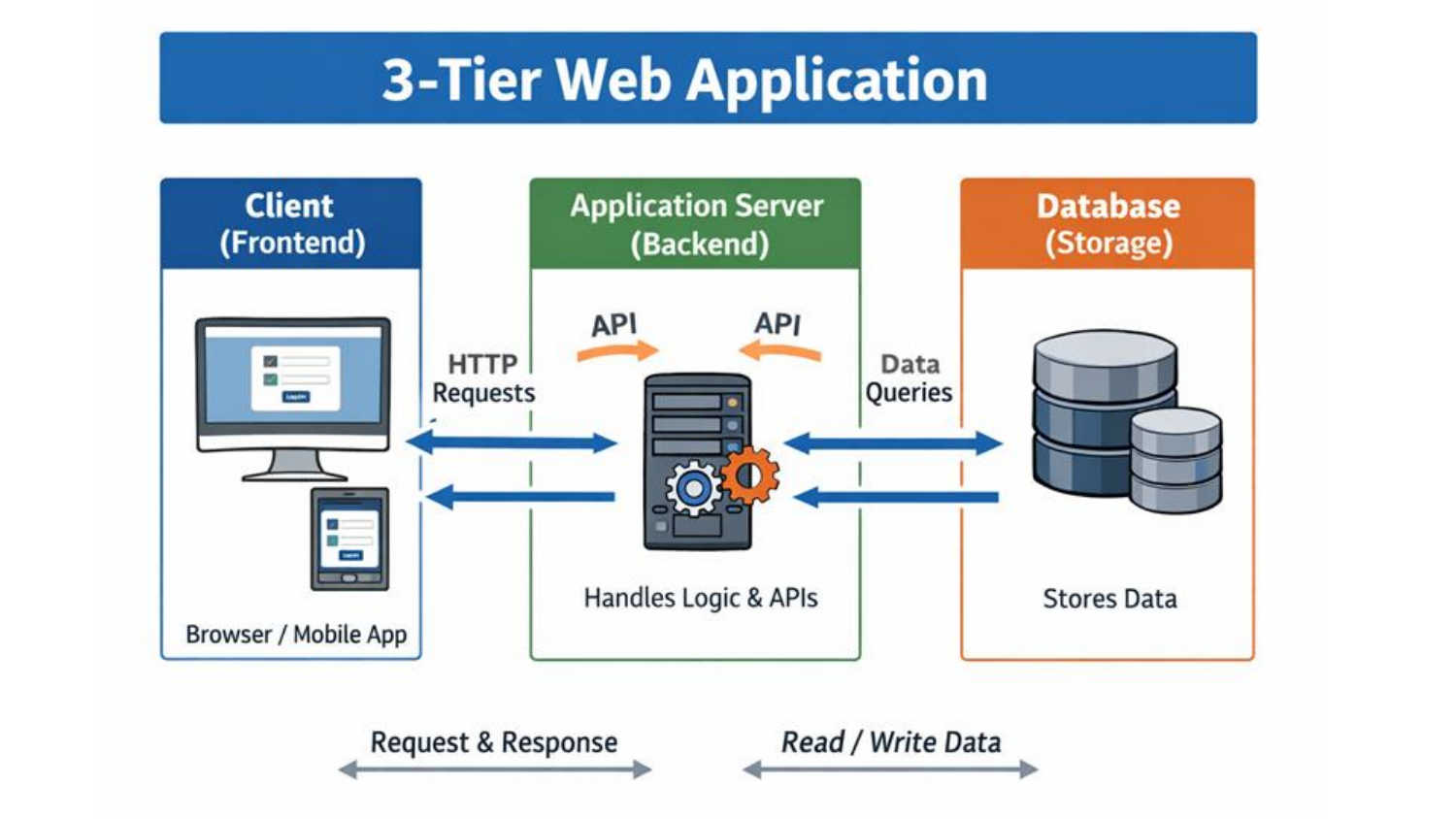

To make this real, I demoed a small project on the webinar — a personal URL bookmark saver. Architecture: Next.js on the front end, AWS Lambda on the back end, DynamoDB for storage, Terraform for infrastructure, GitHub Actions for CI/CD.

The code itself? AI wrote 90% of it. Single prompt for the Next.js scaffolding. Single prompt for the Lambda handlers. The CRUD logic was done in minutes.

The other 10% took two days.

Here's what AI couldn't do well:

- The IAM policy. Lambda needs the right permissions to write to DynamoDB and read from S3. Get the policy wrong and the failure is easy to miss, often an access-denied error that surfaces only in the logs. The model generates plausible IAM JSON. Plausible is not correct.

- The architecture decision. "Should I use Lambda or EC2 for this app?" depends on traffic patterns, cost, and cold-start tolerance. AI will give you a confident answer, and it will often be the wrong one if it doesn't have your context.

- The missing pieces. I asked Claude to deploy the stack. It generated Terraform for Next.js + Lambda + DynamoDB. It forgot the API Gateway. It forgot the S3 bucket for static assets. Lambda doesn't work without an invocation source. AI doesn't always know what it's missing.

- The live deploy that failed on stage. During the webinar, I ran the GitHub Actions deploy live. It failed. Why? A Tailwind module wasn't installed in the front-end build. AI didn't catch it. I had to read the error, trace it to the build step, and fix it.
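
To make the IAM point concrete, here is a small sketch of the kind of check worth running on a generated policy before trusting it. Everything specific in it is an assumption for illustration: the policy contents, the placeholder ARNs, the `REQUIRED_ACTIONS` list, and the helper names. It only compares `Action` patterns in `Allow` statements, so it is a sanity check, not a replacement for AWS's own policy evaluation.

```python
from fnmatch import fnmatchcase

# Hypothetical policy for the bookmark Lambda; the ARNs are placeholders.
POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["dynamodb:GetItem", "dynamodb:Query"],
            "Resource": "arn:aws:dynamodb:REGION:ACCOUNT_ID:table/bookmarks",
        },
        {
            "Effect": "Allow",
            "Action": "s3:Get*",
            "Resource": "arn:aws:s3:::example-bucket/*",
        },
    ],
}

# Actions this sketch assumes the function needs (an assumption for the demo).
REQUIRED_ACTIONS = ["dynamodb:PutItem", "dynamodb:GetItem", "s3:GetObject"]


def as_list(value):
    """IAM accepts a single value or a list; normalize to a list."""
    return list(value) if isinstance(value, (list, tuple)) else [value]


def allowed_patterns(policy: dict) -> list[str]:
    """Collect Action patterns from Allow statements only."""
    patterns = []
    for statement in as_list(policy.get("Statement", [])):
        if statement.get("Effect") == "Allow":
            patterns.extend(as_list(statement.get("Action", [])))
    return patterns


def missing_actions(policy: dict, required: list[str]) -> list[str]:
    """Return required actions that no Allow statement covers.

    Simplified: ignores Deny, NotAction, Resource scoping and Condition blocks.
    Action names are compared case-insensitively, with * and ? as wildcards.
    """
    patterns = [p.lower() for p in allowed_patterns(policy)]
    return [
        action for action in required
        if not any(fnmatchcase(action.lower(), p) for p in patterns)
    ]


if __name__ == "__main__":
    print(missing_actions(POLICY, REQUIRED_ACTIONS))  # prints ['dynamodb:PutItem']
```

The example policy looks reasonable at a glance and still cannot write a bookmark, which is the "plausible is not correct" problem in miniature.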

That last failure was the most important moment of the webinar, and I had only loosely planned for it. Deploying is easy. Debugging a broken deploy under time pressure is the actual job. That is what hiring managers test for. That is what AI will not do for you.

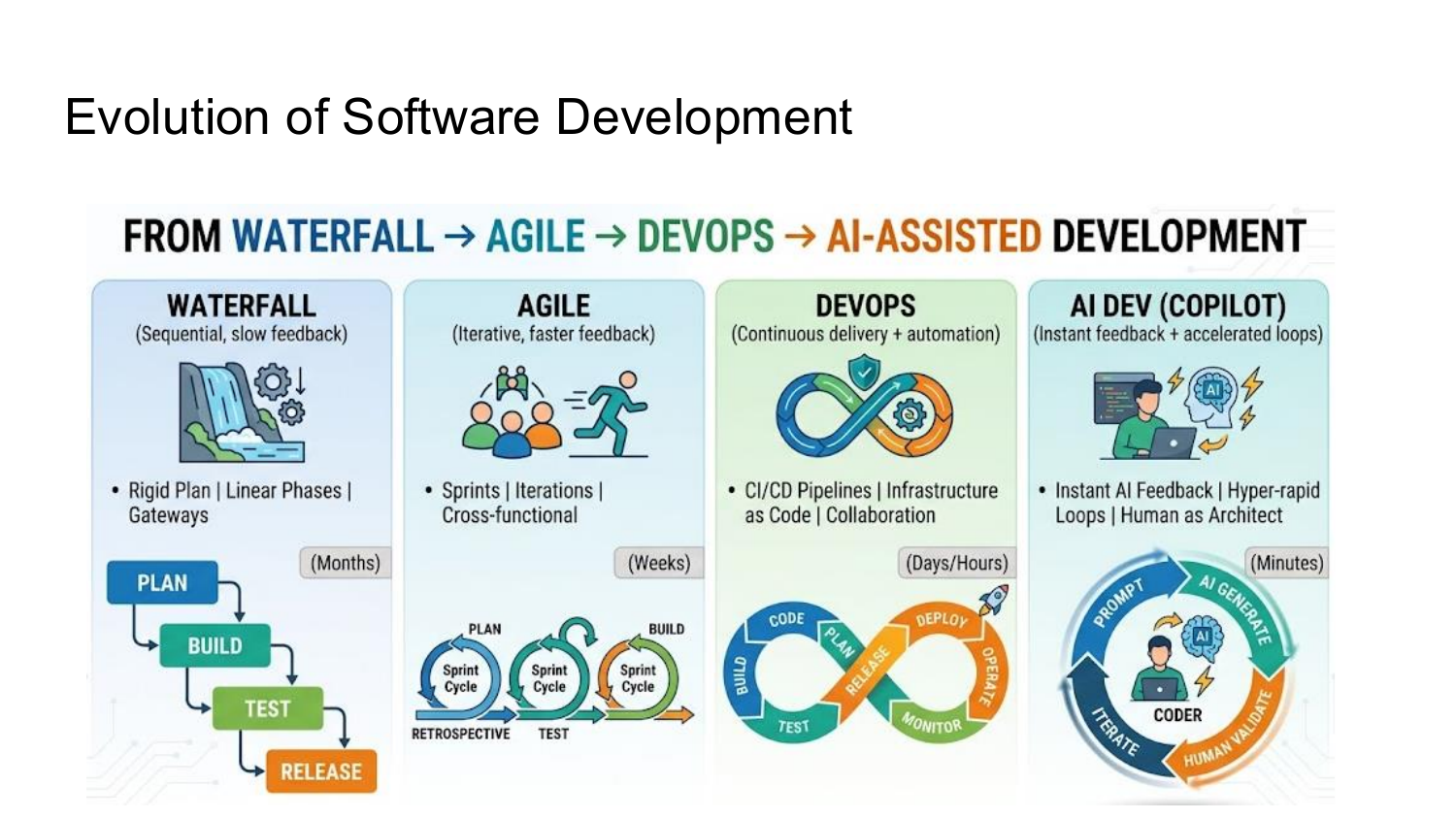

Why now? The evolution of software development

The reason this matters in 2026 specifically — not 2024, not 2027 — is where we are in the cycle.

The release cadence has compressed with every wave:

- Waterfall (2000s): months per release. I wrote my first production code in 2005. We had six-month gates between features.

- Agile (2010s): two-to-four-week sprints.

- DevOps (late 2010s): continuous delivery, multiple deploys per day.

- AI-assisted development (now): minutes from prompt to running code.

When the cycle compresses, the work doesn't disappear — it migrates. In the AI-Dev cycle, the human is still architect, validator, and decision-maker. We just hold a much sharper tool.

The skills that compressed away — typing speed, syntax memorization, boilerplate — are not what people get hired for anymore. The skills that survive — system design, security thinking, debugging under constraint, business judgment — are what hiring managers are paying for.

Most "vibe coding" portfolios are training the wrong muscle for this market.

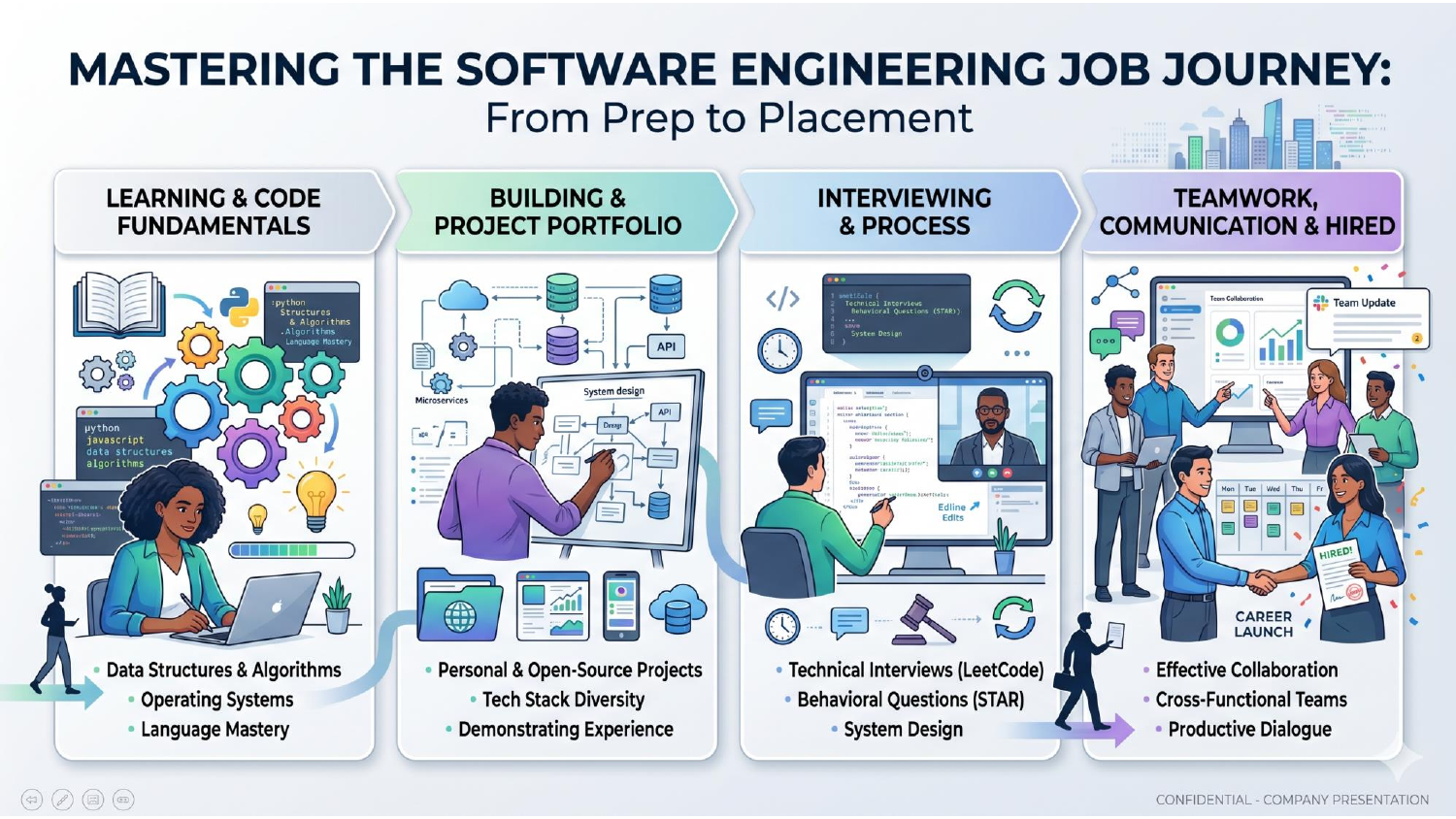

So what do you actually do?

If you're reading this and thinking "okay, but I still need a job," here's the path I walked the bootcamp through:

1. Code fundamentals. You don't need to grind LeetCode for six months. You do need to know what a tuple is, what HTTP layers are, what a 401 versus a 501 means (Unauthorized versus Not Implemented), what a JWT does and why it matters. AI can help you when you can ask the right question. You can't ask the right question if you don't know the basics.

2. A portfolio that maps to real business problems. Not a to-do app. Not another notes app. Pick something that resembles what an employer in your region actually pays for. East coast — banks, insurance, supply chain. Build something in that domain. Use the platform that domain hires for: AWS, Azure, Databricks, Kafka. "Full stack developer" is a million-person keyword. "Full stack developer who's shipped on Azure + Databricks" is three recruiters in your inbox this week.

3. The interview process — the same as it ever was. Companies are still doing coding interviews, and increasingly with proctoring software that detects AI use. Practice on LeetCode or HackerRank — not for the sake of competitive programming, but because you need to think out loud in front of a stranger under time pressure without panicking. That muscle is real.

4. Effective collaboration. Agile, code reviews, pull requests, communication with non-engineers. Hard to fake, easy to test for, and the thing junior candidates most often lose offers on.
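
Going back to the first item in the list above, here is a minimal sketch of what a JWT does and how it connects to a 401. It signs and verifies an HS256 token using only the Python standard library. The secret, the helper names, and the `status_for` mapping are assumptions made for illustration. Real services should use a maintained JWT library and also check claims such as expiry, which this sketch skips.

```python
import base64
import hashlib
import hmac
import json
from http import HTTPStatus

SECRET = b"demo-secret-not-for-production"  # hypothetical key, illustration only


def b64url(data: bytes) -> str:
    """Base64url without padding, as JWTs use."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign(payload: dict, secret: bytes = SECRET) -> str:
    """Build header.payload.signature, signed with HMAC-SHA256."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    digest = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{b64url(digest)}"


def verify(token: str, secret: bytes = SECRET):
    """Return the payload if the signature matches, otherwise None."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
        expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, b64url_decode(signature_b64)):
            return None
        return json.loads(b64url_decode(payload_b64))
    except ValueError:  # malformed token, bad base64, or bad JSON
        return None


def status_for(token) -> HTTPStatus:
    """401 means the caller has not proven who they are."""
    if token and verify(token) is not None:
        return HTTPStatus.OK
    return HTTPStatus.UNAUTHORIZED


if __name__ == "__main__":
    good = sign({"sub": "user-1"})
    print(status_for(good).value, status_for(good).phrase)
    print(status_for("garbage").value, status_for("garbage").phrase)
    # 501 is a different kind of problem: the server lacks the feature entirely.
    print(HTTPStatus.NOT_IMPLEMENTED.value, HTTPStatus.NOT_IMPLEMENTED.phrase)
```

The payload of a JWT is readable by anyone who holds the token. The signature is what stops a caller from editing it, which is why a tampered token should end in a 401.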

Closing

The narrative that AI is replacing developers is mostly written by people who do not develop software for a living. They write articles for ad revenue. The real economy looks different: AI is a force multiplier for engineers who already understand systems. It is a dead end for people trying to skip the systems part.

If your goal is a job — a salary that pays your rent and lets you stop scrolling job boards — vibe coding is half the equation. The other half is engineering: legacy code, IAM policies, deploys that fail at 4:55 PM on a Friday, and the patient skill of figuring out why.

Build that half. The market is paying for it.

If you want to go through this end-to-end, I run a 5-day live Full Stack AI Bootcamp where we build and deploy a real full-stack AI app together (FastAPI, React, MongoDB, AWS, CI/CD). You walk out with a deployed project, a real GitHub history, and the engineering vocabulary interviewers test for. Cohorts are kept small.

Cohort dates, pricing, and full curriculum: becloudready.com/programs/full-stack-ai-bootcamp

— Chandan Kumar, Founder, beCloudReady