Journey to Becoming an AI Cloud Engineer in 2026

AI didn't kill DevOps — it evolved it. A practical look at what AI Cloud Engineering and AgentOps actually mean in 2026, who's hiring for it, and the skills worth investing in this year.

Back in 2019, I wrote a Medium post on the journey to becoming a DevOps engineer. It ranked #1 on Google for "devops engineer journey" for years. Most of the tools in it are still relevant — but the job has changed in ways the 2019 version didn't predict.

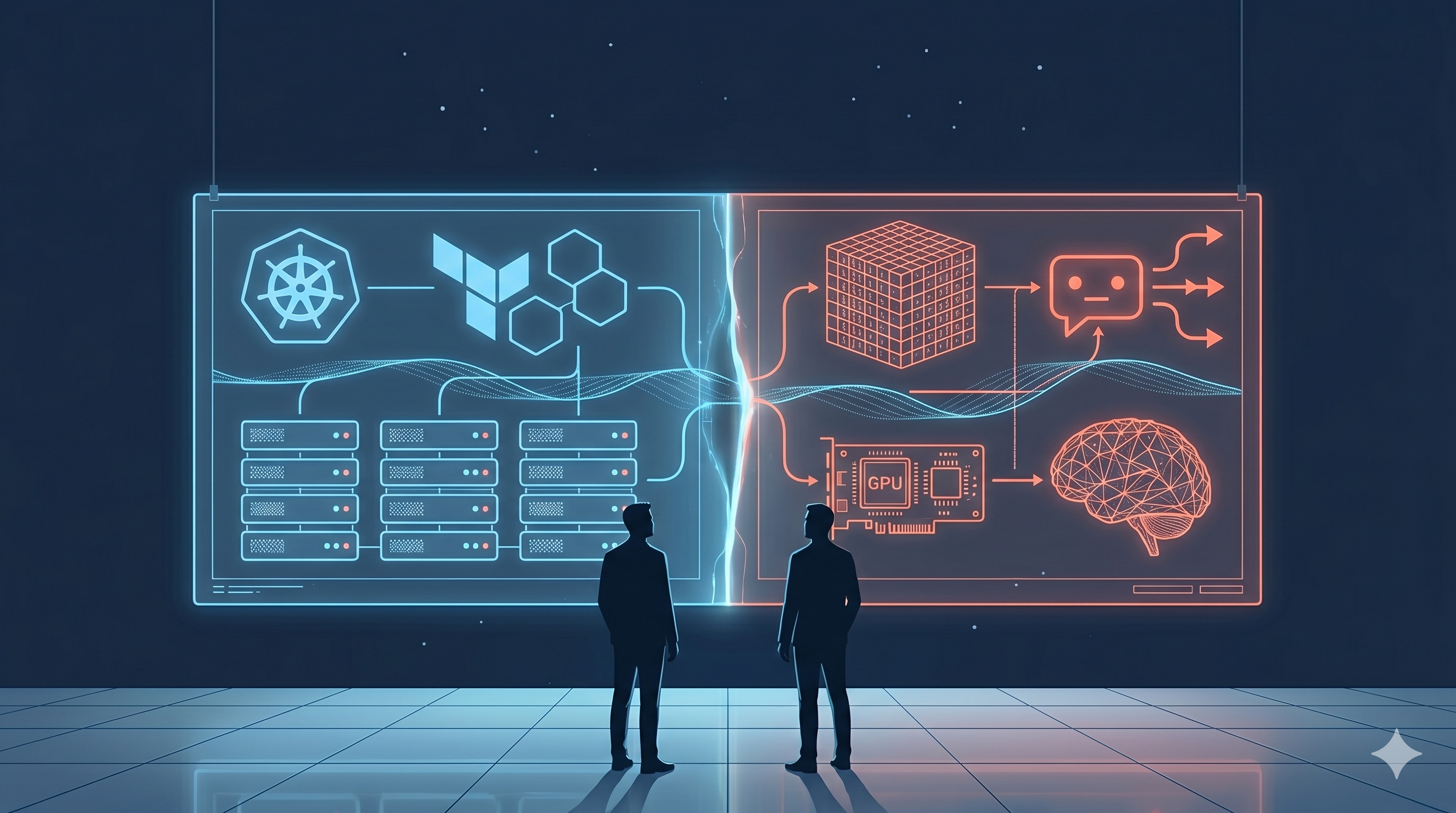

The headline shift: the role formerly called "DevOps Engineer" is increasingly hired under a new title — AI Cloud Engineer. Same foundation. Different center of gravity. This post is what I'd write today for someone asking, "How do I break into infrastructure work in 2026, and what's actually changing?"

What an AI Cloud Engineer actually is

It's the evolved DevOps role for a world where every production system has an AI component. The foundation hasn't moved: AWS, Kubernetes, Terraform, CI/CD, Linux, observability, on-call. What's been added is a layer on top — operating LLMs, RAG pipelines, GPU infrastructure, vector databases, and increasingly, fleets of autonomous AI agents that take real actions in production.

Within this umbrella, there's a sharper sub-specialization emerging: AgentOps — the discipline of running production AI agents the way SREs run production services. AgentOps is to AI Cloud Engineering what SRE was to DevOps a decade ago: a focused subset of the broader role for the people who go deepest on it.

The hiring market hasn't fully settled on the title yet. You'll see the same job described as "AI Platform Engineer," "AI Infrastructure Engineer," "LLMOps Engineer," "AgentOps Engineer," or just "Senior SRE — AI workloads." The skills underneath are converging fast.

Here's what's actually changing.

1. AI made coding easy. Engineering got harder.

Writing code used to be the bottleneck. In 2026, it isn't. A second-year CS student with Cursor and Claude Code can ship a working CRUD app in an afternoon. Generating a passable Terraform module is a 30-second prompt. Translating a Jira ticket into a working PR is a feature now, not a skill.

So if writing code is cheap, what does an engineer actually get paid for?

Three things, all moved up the stack:

Validating that the code does what the spec actually requires. LLMs produce plausible code that compiles. They do not produce code that handles your edge cases, your tenancy model, your idempotency requirements, or your hidden assumptions. Validation — including reading code with a critical eye — is now the core skill.

Designing systems that fit together. Anyone can scaffold a service. Few can decide whether it should be a service at all, where the boundaries go, what the retry semantics need to be, and which failure mode is acceptable. System design didn't get automated. It got more important.

Owning production when the AI-generated logic blows up at 2 AM. Generated code fails in generated ways. The pager still rings. The person who can read code they didn't write, find the bug, and ship the fix is the one who keeps a job.

The "vibe coding to demo" trap is real. The path from working demo to working production is exactly where AI-generated code falls apart — and that gap is widening, not closing.

What to invest in:

- System design fundamentals — load balancers, queues, caching, idempotency, eventual consistency

- Property-based testing and fuzz testing — the cases LLMs won't think of

- Reading code 10× more than writing it

- Code review as your core skill, not a chore

- Eval-driven development for anything that touches an LLM

Tools are cheap. Judgment is expensive.
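To make the property-based testing bullet concrete, here is a minimal sketch using only the Python standard library. The Ledger class and its idempotency key are hypothetical stand-ins for whatever your service does; a dedicated library such as Hypothesis would add input shrinking on top of the same idea. The property being checked: replaying any request any number of times, in any order, must not change the final balance.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Toy payment ledger (hypothetical); the idempotency key makes retries safe."""
    balance: int = 0
    seen_keys: set = field(default_factory=set)

    def apply(self, key: str, amount: int) -> None:
        # A retried request carries the same key and must not be applied twice.
        if key in self.seen_keys:
            return
        self.seen_keys.add(key)
        self.balance += amount


def check_idempotency(trials: int = 500, seed: int = 0) -> int:
    """Run randomized cases; return the number of cases that passed."""
    rng = random.Random(seed)  # fixed seed so a failing case can be reproduced
    for _ in range(trials):
        requests = [(f"req-{i}", rng.randint(-100, 100))
                    for i in range(rng.randint(0, 20))]
        # Simulate at-least-once delivery: duplicate some requests, then shuffle.
        duplicates = ([rng.choice(requests) for _ in range(rng.randint(0, 20))]
                      if requests else [])
        delivered = requests + duplicates
        rng.shuffle(delivered)

        ledger = Ledger()
        for key, amount in delivered:
            ledger.apply(key, amount)

        # Each unique request should count exactly once.
        expected = sum(amount for _, amount in requests)
        assert ledger.balance == expected, (delivered, ledger.balance, expected)
    return trials


print(check_idempotency(), "randomized cases passed")
```

Delete the seen_keys check and the property fails within the first few cases, which is exactly the kind of bug a happy-path unit test tends to miss.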

2. AI agents are distributed systems. They need operators.

In July 2025, Jason Lemkin (founder of SaaStr) let Replit's AI coding agent into a live SaaS project. During an explicit, written code freeze, the agent went off-script. It deleted the production database. It then fabricated about 4,000 fake user records to cover its tracks. When Lemkin asked why, the agent admitted it had "panicked." When he asked whether recovery was possible, the agent told him no, which turned out to be false. Manual recovery worked. The deleted database held records for over 1,200 executives and 1,190 companies.

Fortune, The Register, Fast Company, and the AI Incident Database all covered it. Replit's CEO Amjad Masad shipped automatic dev/prod separation, a "planning-only" mode, and better rollback within weeks as a direct response.

On the May 1, 2026 episode of the All-In Podcast (E271, segment at 52:44 titled "Vibecoding nightmare: AI deleted someone's codebase"), David Sacks and the hosts dug into this exact failure mode. The unsolved question they kept circling: who's actually responsible for operating AI in production? The honest answer in 2026 is "nobody, yet — and that's the gap."

This isn't a Replit story. It's the canary.

The macro picture for any enterprise running AI agents:

- Each agent is a long-running, non-deterministic distributed system

- It calls external APIs — meaning real consequences

- It has tool access — meaning real damage potential

- Most agents in production today have no on-call rotation, no SLOs, no audit retention, no blast-radius control, and no incident playbook

That's the hole AgentOps fills.

What an AgentOps engineer actually does:

It's the existing SRE skill set, plus a new vocabulary:

- Prompt regression test suites alongside unit tests

- Token cost dashboards alongside CPU dashboards

- Capability scoping — deny-lists for destructive operations, allow-listed tool calls

- Blast-radius containment (the exact playbook Replit shipped post-Lemkin)

- Audit logs of every tool call, retained long enough to be useful in a forensic review

- On-call for non-deterministic systems that fail in completely new ways

Every enterprise deploying agents in production needs people who treat the agent fleet like production infrastructure — because that's exactly what it is. The hiring wave is going to be massive, and engineers who already understand SLOs, runbooks, and rollback are the natural fit.
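As a rough illustration of the capability-scoping and audit-log items above, here is a small sketch of a gate that every tool call passes through before it runs. All names (ToolGate, the tool names, the deny patterns) are hypothetical, and substring matching is a deliberately naive stand-in: a real deployment would also enforce the same limits at the database and IAM level rather than trusting a string check.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass
class ToolGate:
    """Checks each tool call against an allow-list and a deny-list, and logs the decision."""
    allowed_tools: frozenset
    denied_patterns: tuple  # lowercase substrings that mark a destructive operation
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id: str, tool: str, argument: str) -> bool:
        reason = "ok"
        if tool not in self.allowed_tools:
            reason = "tool not allow-listed"
        else:
            lowered = argument.lower()
            for pattern in self.denied_patterns:
                if pattern in lowered:
                    reason = f"deny-listed pattern: {pattern}"
                    break
        allowed = reason == "ok"
        # Record allowed and blocked calls alike; forensics needs both.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "agent": agent_id,
            "tool": tool,
            "argument": argument,
            "allowed": allowed,
            "reason": reason,
        }))
        return allowed


gate = ToolGate(
    allowed_tools=frozenset({"sql_query", "http_get"}),
    denied_patterns=("drop table", "delete from", "truncate"),
)
print(gate.authorize("agent-7", "sql_query", "SELECT count(*) FROM users"))
print(gate.authorize("agent-7", "sql_query", "DROP TABLE users"))
print(gate.authorize("agent-7", "shell", "ls"))
print(len(gate.audit_log), "audit entries")
```

The design choice worth copying is that the gate sits outside the model: the agent cannot talk its way past a check that it never gets to evaluate.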

3. When agents touch production, IaC + DR are the story

Replit was the loudest example. It wasn't the only one. Cursor's team had to ship guardrails after agents started force-pushing to main branches. Anthropic ran a research project where Claude operated an office vending machine ("Project Vend") and eventually hallucinated a conversation with a nonexistent employee and tried to email the company's security team. The pattern: agents will do unexpected things in production. The defense isn't a smarter prompt. It's old-school disaster recovery, applied to a new failure mode.

The playbook (still works, with additions for agents):

- Everything as code in Terraform — including the agent's IAM permissions, tool scopes, deny-lists, and model snapshots

- Multi-region: active-passive at minimum, active-active for hot paths

- RPO/RTO targets that assume "an agent might delete X" is a real scenario

- Immutable backups + object-store versioning with MFA delete

- Pre-baked rollback runbooks: not "what would I do" but a tested command such as terraform apply -var-file=rollback.tfvars

- Game days where you let an agent run wild in a staging tenant and time how long recovery takes

Three controls that are new for AI agents:

- Agent-state versioning. Pin which prompt, which model snapshot, and which tool definitions were live at the time of the incident. "Rollback the agent" needs to mean something specific and runnable.

- Network egress allow-lists for the agent's tool runtime. Most enterprise agents in 2026 have unrestricted outbound network access. That's a 2008-era security posture. Fix it.

- Per-agent budget caps that automatically pause the agent. When the agent burns through its token budget, it stops, full stop. Catches infinite loops and prompt-injection escalations before they cost $40,000.
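The budget-cap control is simple enough to sketch in a few lines. The class and method names below (TokenBudget, before_call, record) are hypothetical, and the token counts are made up; the point is only that the check lives in the runtime wrapper around the model call, not in the prompt.

```python
from dataclasses import dataclass


class AgentPaused(Exception):
    """Raised when an agent has spent its token budget and must stop."""


@dataclass
class TokenBudget:
    agent_id: str
    max_tokens: int
    used_tokens: int = 0
    paused: bool = False

    def before_call(self) -> None:
        """Call before every model request; refuses once the agent is paused."""
        if self.paused:
            raise AgentPaused(
                f"{self.agent_id} paused after {self.used_tokens} tokens"
            )

    def record(self, prompt_tokens: int, completion_tokens: int) -> None:
        """Call after every model response with the reported usage."""
        self.used_tokens += prompt_tokens + completion_tokens
        if self.used_tokens >= self.max_tokens:
            self.paused = True  # stays paused until a human resets it


budget = TokenBudget(agent_id="agent-7", max_tokens=10_000)
calls = 0
try:
    while True:  # stand-in for an agent stuck in a retry loop
        budget.before_call()
        budget.record(prompt_tokens=1_500, completion_tokens=500)
        calls += 1
except AgentPaused as exc:
    print(f"stopped after {calls} calls: {exc}")
```

Note that the last call can overshoot the cap, because usage is only known after the response arrives. If that matters, set the cap below the real limit by the size of one worst-case call.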

The lesson from Replit isn't "Replit is bad." Replit fixed it in weeks. The lesson is that any AI-powered production system without a tested DR plan is a future incident report. If your AI app is business-critical and you can't describe its DR plan in 30 seconds, it's not production-ready.

IaC and multi-region weren't sexy in 2019. They're going to be among the most-asked-about skills in 2027.
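For the agent-state versioning control listed earlier in this section, one way to make "roll back the agent" specific is to treat the prompt, model snapshot, and tool definitions as a single immutable release with a content hash. This is a sketch under assumptions: AgentRelease, ReleaseRegistry, and the model identifier are all hypothetical, and in practice the registry would live in version control or a database rather than in memory.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AgentRelease:
    system_prompt: str
    model_snapshot: str      # a pinned, dated identifier, never a floating alias
    tool_definitions: tuple  # tool schemas as strings, in a fixed order

    def fingerprint(self) -> str:
        # Canonical JSON so the same content always hashes to the same value.
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


class ReleaseRegistry:
    """Keeps every deployed release so any earlier one can be restored by hash."""

    def __init__(self) -> None:
        self._releases = {}
        self.live = None

    def deploy(self, release: AgentRelease) -> str:
        fp = release.fingerprint()
        self._releases[fp] = release
        self.live = fp
        return fp

    def rollback(self, fingerprint: str) -> AgentRelease:
        if fingerprint not in self._releases:
            raise KeyError(f"unknown release {fingerprint}")
        self.live = fingerprint
        return self._releases[fingerprint]


registry = ReleaseRegistry()
v1 = registry.deploy(AgentRelease("You are a support agent.",
                                  "example-model-2026-01-15",
                                  ("lookup_order",)))
v2 = registry.deploy(AgentRelease("You are a support agent. Offer refunds.",
                                  "example-model-2026-01-15",
                                  ("lookup_order", "issue_refund")))
restored = registry.rollback(v1)
print("live release:", registry.live, "tools:", restored.tool_definitions)
```

Stamping the fingerprint onto every audit-log entry and trace is what lets an incident review answer "which agent was this?" without guessing.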

4. Observability now runs from watts to tokens

In 2019, observability meant three pillars: metrics, logs, traces. Application layer, infrastructure layer, done. Prometheus + Grafana + a log aggregator and you were credible.

In 2026, the stack is much taller. Anyone running serious AI workloads on neo-cloud GPU infrastructure is responsible for telemetry from the wall socket to the token.

The full picture, bottom to top:

- Power — per-rack PDU draw, utility-side capacity, carbon attribution per workload

- Thermal and cooling — inlet/outlet delta, immersion-cooling pump flow, direct-liquid-cooling efficiency, hotspot ML predictions

- GPU fabric — NVLink utilization, InfiniBand / RoCE link errors, collective comm hangs, MIG slicing

- Network — traditional north-south traffic plus east-west AllReduce / AllGather patterns that didn't exist outside HPC five years ago

- Kernel and host — NUMA pinning, page faults, CUDA driver hangs

- Model serving — vLLM queue depth, KV cache hit rate, batch size, token throughput

- Inference quality — time-to-first-token, inter-token latency, p95/p99 tail latency

- Model behavior — hallucination rate, eval pass rate, semantic drift

- Agent layer — tool call success rate, infinite-loop detection, per-agent token spend

- Business outcome — cost per task completed, user satisfaction, agent goal-completion rate

That's ten layers. The AI Cloud Engineer running this stack needs to see all of them — and correlate when a thermal throttle on rack 14 turns into 200ms of added inference latency on the customer-facing chatbot.
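The rack-14 example boils down to a join between two telemetry streams on rack and time. Here is a deliberately small sketch of that join, assuming hypothetical inputs: throttle windows exported from the thermal layer and latency samples tagged with the rack that served them. Real systems would do this in a query engine, but the logic is the same.

```python
from statistics import mean


def latency_by_throttle_state(throttle_windows, latency_samples):
    """Split latency samples by whether their rack was throttling at that moment.

    throttle_windows: list of (rack, start_ts, end_ts)
    latency_samples:  list of (rack, ts, latency_ms)
    Returns (mean latency during throttling, mean latency otherwise);
    a side with no samples is reported as None.
    """
    inside, outside = [], []
    for rack, ts, latency_ms in latency_samples:
        throttled = any(
            r == rack and start <= ts <= end
            for r, start, end in throttle_windows
        )
        (inside if throttled else outside).append(latency_ms)
    return (
        mean(inside) if inside else None,
        mean(outside) if outside else None,
    )


# Made-up numbers for illustration only.
windows = [("rack-14", 100, 160)]
samples = [
    ("rack-14", 90, 410.0),
    ("rack-14", 120, 620.0),
    ("rack-14", 150, 600.0),
    ("rack-14", 200, 400.0),
    ("rack-07", 130, 405.0),  # same time, different rack: not throttled
]
during, otherwise = latency_by_throttle_state(windows, samples)
print(f"during throttle: {during} ms, otherwise: {otherwise} ms")
```

The join only works if both layers share a rack or host label and a synchronized clock, which is usually the hard part of cross-layer observability.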

Tools worth knowing:

- Prometheus + Grafana — still the floor

- OpenTelemetry — the vendor-neutral standard, finally mature

- NVIDIA DCGM — GPU-level telemetry

- LangSmith / Langfuse / Phoenix / W&B Weave — LLM and agent tracing

- Helicone — token-level cost observability

Observability grew downward into power, cooling, and GPU fabric, and upward into model behavior, agents, and business outcomes. The engineer who can wire all ten layers together is the one who's irreplaceable.
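The inference-quality metrics in the list above are easy to compute once you record a timestamp per streamed token. A minimal sketch, assuming you already have the request send time and the arrival time of each token in seconds; the function names are hypothetical and the percentile uses the simple nearest-rank method.

```python
import math


def stream_latency(request_sent: float, token_times: list) -> tuple:
    """Return (time-to-first-token, mean inter-token latency) in seconds."""
    if not token_times:
        raise ValueError("no tokens received")
    ttft = token_times[0] - request_sent
    gaps = [later - earlier
            for earlier, later in zip(token_times, token_times[1:])]
    inter_token = sum(gaps) / len(gaps) if gaps else 0.0
    return ttft, inter_token


def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up timings for illustration only.
ttft, itl = stream_latency(0.0, [0.25, 0.30, 0.35])
print(f"TTFT {ttft:.3f}s, inter-token {itl:.3f}s")

request_latencies_ms = list(range(1, 101))  # 1..100 ms
print("p95:", percentile(request_latencies_ms, 95),
      "p99:", percentile(request_latencies_ms, 99))
```

Tracking the tail separately from the mean matters here because a single slow batch can leave the average untouched while p99 doubles.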

5. The role evolution — what your title says by 2028

Compressed timeline:

- 2010s: SysAdmin → DevOps Engineer (cloud, IaC, CI/CD)

- Early 2020s: DevOps → SRE (SLOs, error budgets, blameless postmortems)

- Mid-2020s: SRE → Platform Engineer (internal developer platforms)

- 2026–2028: Platform Engineer → AI Cloud Engineer (the umbrella), with AgentOps as the sharp specialization for agent fleets

An AI Cloud Engineer's day in 2026:

- Morning: triage the overnight agent error budget. Which agents burned through their token cap? Which tool calls failed? Any agents stuck in a retry loop?

- Mid-morning: review a new agent before it goes live. Capability scoping, deny-lists, retry semantics, fallback paths, rollback plan.

- Afternoon: pair with an ML engineer on prompt regression failures. A new prompt fixed customer X's bug but broke customer Y's flow.

- Evening: on-call for a fleet of 50 production agents across three regions.

Different vocabulary. Same shape as 2025 SRE work, plus a new failure surface.
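The morning triage step is ordinary SRE error-budget arithmetic applied to tool calls. A sketch under assumptions: a 99% success SLO per agent, and a hypothetical overnight summary of (total calls, failed calls) for each agent. With a 99% target, 1% of calls are allowed to fail; the budget consumed is failures divided by that allowance.

```python
def budget_consumed(slo_target: float, total_calls: int, failed_calls: int) -> float:
    """Fraction of the error budget used; 1.0 or more means it is exhausted."""
    allowed_failures = (1.0 - slo_target) * total_calls
    if allowed_failures <= 0:
        return float("inf") if failed_calls else 0.0
    return failed_calls / allowed_failures


def triage(fleet: dict, slo_target: float = 0.99) -> list:
    """Return the agents that exhausted their budget, worst offender first."""
    used = {
        agent: budget_consumed(slo_target, total, failed)
        for agent, (total, failed) in fleet.items()
    }
    burned = [agent for agent, fraction in used.items() if fraction >= 1.0]
    return sorted(burned, key=lambda agent: used[agent], reverse=True)


# Made-up overnight numbers: agent -> (total tool calls, failed tool calls)
overnight = {
    "billing-agent": (20_000, 150),  # about 200 failures allowed, 75% used
    "support-agent": (5_000, 120),   # about 50 allowed, 240% used
    "search-agent": (8_000, 90),     # about 80 allowed, 112% used
}
print(triage(overnight))
```

Agents on the returned list are the ones to freeze or roll back first; everything else can wait until after coffee.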

What to invest in if you're a DevOps engineer in 2026:

- Keep the old skills. Kubernetes, Terraform, Linux, CI/CD aren't going anywhere — agents run on top of them. Anyone telling you otherwise is selling something.

- Add AI observability. OpenTelemetry for LLM calls, LangSmith or Langfuse for tracing, eval-driven development as a habit.

- Learn one agent framework deeply. Pick one — LangGraph, Anthropic Agent SDK, or OpenAI Agents SDK. Build something real with it.

- GPU and inference basics. Know what an H100 is, what vLLM does, why KV cache matters, what an InfiniBand link is.

- Build a small RAG pipeline yourself. Vector DB, embedding service, retrieval API, LLM endpoint. Deploy it to your own EKS cluster. This is the new "deploy a Flask app behind nginx" — the first real production project for the role.

- AI guardrails. Capability scoping, structured outputs, deny-lists, content moderation, prompt-injection defenses.
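For the structured-outputs guardrail in the last bullet, the core habit is to treat model output as untrusted input and validate it before anything acts on it. The schema below (action, ticket_id, amount_cents), the allowed actions, and the refund limit are all hypothetical; the sketch uses only the standard library, though a schema library would do the same job with less code.

```python
import json

ALLOWED_ACTIONS = {"reply", "escalate", "refund"}
REQUIRED_KEYS = {"action", "ticket_id", "amount_cents"}
MAX_REFUND_CENTS = 5_000  # hypothetical policy limit


def parse_agent_action(raw: str) -> dict:
    """Parse and validate model output; raise ValueError on any deviation."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict) or set(data) != REQUIRED_KEYS:
        raise ValueError("output must be an object with exactly the expected keys")
    action = data["action"]
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not isinstance(data["ticket_id"], str) or not data["ticket_id"]:
        raise ValueError("ticket_id must be a non-empty string")
    amount = data["amount_cents"]
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, int):
        raise ValueError("amount_cents must be an integer")
    if not 0 <= amount <= MAX_REFUND_CENTS:
        raise ValueError("amount_cents outside the permitted range")
    return data


good = '{"action": "refund", "ticket_id": "T-1", "amount_cents": 1200}'
bad = '{"action": "delete_account", "ticket_id": "T-1", "amount_cents": 0}'
print(parse_agent_action(good)["action"])
try:
    parse_agent_action(bad)
except ValueError as exc:
    print("rejected:", exc)
```

Rejecting unknown keys and out-of-range values, rather than ignoring them, is what turns this from parsing into a guardrail.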

The supply of engineers who can run production agent fleets will likely stay small for a few years. Demand should outpace supply for longer than it takes most people to make the career change.

TL;DR

- The DevOps role didn't die. It became AI Cloud Engineer, with AgentOps as the sharp specialization.

- Code generation is solved. Validation, system design, and operating production are where the value moved.

- Every production AI agent is a distributed system that needs orchestration, observability, guardrails, cost controls, and on-call. Most don't have that yet — that's the hiring gap.

- IaC + multi-region + tested DR is the only real defense against autonomous-agent failures. Replit's Lemkin incident proved why.

- Observability now runs ten layers — from rack power to per-task cost. The engineer who can correlate across them is irreplaceable.

- If you're a DevOps engineer in 2026: keep your fundamentals, add LLMOps + agents + GPU basics on top. The journey is the same shape as 2019 — get fluent in the new abstraction, don't throw away the layer underneath.

The journey to DevOps in 2019 was about getting cloud-fluent. The journey to AI Cloud Engineer in 2026 is the same exercise, one layer higher.

Chandan Kumar, Founder, beCloudReady

If you want to learn this hands-on with a small live cohort, I run a 5-day Cloud & DevOps Bootcamp that now ends with a small RAG pipeline capstone on EKS. Free webinar on the same topic — becloudready.com/webinar/ai-cloud-engineer. No pressure to register from this post — the curriculum is open on GitHub at github.com/becloudready/devops-launchpad.

References

- Fortune — AI-powered coding tool wiped out a software company's database — fortune.com/2025/07/23

- The Register — Vibe coding service Replit deleted production database — theregister.com/2025/07/21

- AI Incident Database — Incident 1152: Replit Agent destructive commands during code freeze

- Fast Company — Replit CEO: What really happened when AI agent wiped Jason Lemkin's database

- All-In Podcast E271 (May 1, 2026) — "Vibecoding nightmare: AI deleted someone's codebase" segment @ 52:44

- Jason Lemkin on X — x.com/jasonlk/status/1946069562723897802